In recent posts, we have written about computer technology, systems, and protocols. It’s now time to talk about synthesis and sound – after all, at Nonlinear Labs we have set out to build musical instruments.

Although our instruments are self-contained hardware units, the sound is defined and generated by software running on internal processors.

As specified in our TCD protocol, all time-variant control signals are generated by an ARM microprocessor that is closely connected to the player interface (in our first instrument, the keyboard, wheels, and pedals). The audio signals are generated by a TCD-controlled synth engine that is implemented in Reaktor and runs on an embedded PC.

The synth engine is specially tailored to our hardware, but large parts of it can be developed and tested in the Reaktor application on standard computers (Mac OS X and Windows). This way we benefit from many years of experience creating Reaktor instruments, as well as from the support of excellent Reaktor instrument builders.

Goals for Nonlinear Labs Synthesizers

In recent years, much of the musical instrument industry has moved away from manufacturing durable instruments that are made for practicing musicians. Instead, the market is flooded with pre-programmable sequence-based systems which often don’t require any performer at all, other than someone to push a “start” button, turn some knobs, or select and trigger sample clips. Our aim at Nonlinear Labs is to give musicians an alternative to this “automated music” and to provide them with true, musically playable instruments. Our principal objectives are to create:

Truly playable instruments:

Our central focus is on both musical expressivity and real-time playability, not pre-programmed “sequencing”

Wide-ranging dynamic sonic palettes:

We aim to make instruments capable of both dramatic changes and very fine nuances

Organic character:

Instead of trying to imitate natural instruments, we aim to create nature-like qualities resulting from the complex behavior of synthesis structures

Fresh and unique sounds:

We are not interested in emulating vintage gear, but in moving the culture of sound forward

Instruments with vast sound design potential:

Nonlinear Labs synthesizers will not only be performance instruments, but also highly versatile and powerful sound design tools

Products with longevity:

We want our instruments to stay interesting and inspiring for many years to come

Open systems:

We value free exchange of knowledge and evolutionary development practices

Means of Achieving our Goals

First of all, everything has to be real-time! This means that all signals are generated and processed at the moment the musician touches the instrument. Such an engine cannot be based on sampled or otherwise predefined complex waveforms. In our first synth engine we use sine wave oscillators, which are shaped or modulated by parameters under the real-time control of the musician.
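As a rough illustration of what "real-time" means for an oscillator, the sketch below is a phase-accumulator sine oscillator whose frequency may change on every sample, so a control movement is heard immediately and no stored waveform is involved. It is written in Python for readability, not in Reaktor; the class name, the `render` helper, and the 48 kHz sample rate are assumptions made for the example.

```python
import math

SAMPLE_RATE = 48000.0  # assumed engine sample rate; the post does not state one


class SineOscillator:
    """Phase-accumulator sine oscillator; its frequency may change on every sample."""

    def __init__(self, sample_rate: float = SAMPLE_RATE) -> None:
        self.sample_rate = sample_rate
        self.phase = 0.0  # normalized phase in [0, 1)

    def tick(self, frequency_hz: float) -> float:
        """Return the current sample, then advance the phase by one sample period."""
        out = math.sin(2.0 * math.pi * self.phase)
        self.phase += frequency_hz / self.sample_rate
        self.phase -= math.floor(self.phase)  # wrap back into [0, 1)
        return out


def render(osc: SineOscillator, frequencies: list[float]) -> list[float]:
    """Render one sample per entry of a per-sample frequency control signal."""
    return [osc.tick(f) for f in frequencies]


# Usage: a one-second glide from 220 Hz to 440 Hz, computed sample by sample.
n = int(SAMPLE_RATE)
glide = [220.0 + 220.0 * i / n for i in range(n)]
signal = render(SineOscillator(), glide)
```

Because the frequency argument is read on every `tick`, any control signal (a wheel, a pedal, a velocity-dependent value) can drive the pitch without latency beyond one sample.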

The oscillator waveforms can be modified by phase modulation (often referred to as frequency modulation), wave shaping, filtering, and mixing to produce a rich spectrum. We also experiment a great deal with feedback loops, because they can give the instrument organic and complex behavior, similar to that of acoustic instruments.
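A minimal sketch of how phase modulation, feedback, and wave shaping can combine, assuming a generic two-oscillator structure rather than the actual Phase 22 design: a modulator sine feeds its own previous output back into its phase, then phase-modulates a carrier whose output passes through a tanh wave shaper. The function and its parameters (`pm_index`, `feedback`, `drive`) are hypothetical.

```python
import math


def pm_feedback_voice(num_samples: int, sample_rate: float, carrier_hz: float,
                      modulator_hz: float, pm_index: float, feedback: float,
                      drive: float) -> list[float]:
    """Two-oscillator phase modulation with modulator self-feedback and a tanh shaper.

    pm_index: depth (radians) of the modulator's effect on the carrier phase
    feedback: depth (radians) of the modulator's previous output fed into its own phase
    drive:    gain into the tanh shaper; larger values give a more saturated tone
    """
    if drive <= 0.0:
        raise ValueError("drive must be positive")
    two_pi = 2.0 * math.pi
    carrier_phase = modulator_phase = 0.0
    previous_mod = 0.0  # one-sample feedback memory
    out = []
    for _ in range(num_samples):
        mod = math.sin(two_pi * modulator_phase + feedback * previous_mod)
        previous_mod = mod
        carrier = math.sin(two_pi * carrier_phase + pm_index * mod)
        out.append(math.tanh(drive * carrier) / math.tanh(drive))  # normalized to [-1, 1]
        carrier_phase = (carrier_phase + carrier_hz / sample_rate) % 1.0
        modulator_phase = (modulator_phase + modulator_hz / sample_rate) % 1.0
    return out


tone = pm_feedback_voice(48000, 48000.0, carrier_hz=220.0, modulator_hz=440.0,
                         pm_index=1.5, feedback=0.8, drive=2.0)
```

Raising `feedback` tends to push the modulator from a pure sine toward a brighter, sawtooth-like and eventually noisy waveform, which is one simple way a feedback loop adds complex behavior to an otherwise plain sine structure.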

A complex sound character does not require complex user programming. Since we focus on expressive real-time playing and full control by the musician, you will not find complex envelopes, LFOs, or sequencers in our instruments. Instead, we focus on how key velocity (how hard you hit the keys) influences sound parameters, because this is the principal aspect of expressive keyboard playing. This includes release velocity: the speed at which a key is let go also affects the sound. We are also creating flexible and effective control assignments for a number of controls, such as pedals, wheels, levers, pressure sensors, and touch strips.
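One way to picture velocity-driven sound control is a per-parameter mapping from a normalized velocity (0 to 1) to a parameter range, with an exponent that shapes the response. The sketch below is hypothetical: the `VelocityMapping` class, the chosen parameters, and the idea that a faster key release gives a shorter decay are illustrative assumptions, not the mappings used in our instruments.

```python
from dataclasses import dataclass


@dataclass
class VelocityMapping:
    """Hypothetical mapping from a normalized velocity (0..1) to one sound parameter."""

    low: float          # parameter value at the slowest key movement
    high: float         # parameter value at the fastest key movement
    curve: float = 1.0  # exponent: >1 keeps soft playing subtle, <1 makes it more sensitive

    def apply(self, velocity: float) -> float:
        v = min(max(velocity, 0.0), 1.0)  # clamp to the normalized range
        return self.low + (self.high - self.low) * v ** self.curve


# Hypothetical assignments: strike velocity shapes brightness and level,
# release velocity shapes the release time (faster release -> shorter decay).
BRIGHTNESS = VelocityMapping(low=0.1, high=1.0, curve=2.0)
LEVEL_DB = VelocityMapping(low=-40.0, high=0.0)
RELEASE_S = VelocityMapping(low=2.0, high=0.05)


def on_key_down(strike_velocity: float) -> dict[str, float]:
    """Parameters that are known as soon as the key is struck."""
    return {
        "brightness": BRIGHTNESS.apply(strike_velocity),
        "level_db": LEVEL_DB.apply(strike_velocity),
    }


def on_key_up(release_velocity: float) -> float:
    """Release time in seconds, known only when the key is let go."""
    return RELEASE_S.apply(release_velocity)
```

Splitting the handling into key-down and key-up reflects that release velocity only becomes available at the end of a note.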

In addition, morphing between two presets, or between the current settings and a preset, will be the easiest and most intuitive way to control a large variety of sounds.
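In its simplest form, morphing is a crossfade of every parameter between two sets of values. The sketch below assumes plain linear interpolation of continuous parameters; the parameter names are hypothetical, and a real instrument may use per-parameter curves and must treat switch-type parameters separately.

```python
def morph(preset_a: dict[str, float], preset_b: dict[str, float],
          amount: float) -> dict[str, float]:
    """Linearly crossfade every parameter: amount 0.0 gives A, 1.0 gives B."""
    differing = preset_a.keys() ^ preset_b.keys()
    if differing:
        raise ValueError(f"presets do not share parameters: {sorted(differing)}")
    t = min(max(amount, 0.0), 1.0)  # clamp, e.g. to the travel of a pedal
    return {name: (1.0 - t) * preset_a[name] + t * preset_b[name] for name in preset_a}


# "current" can be the live settings, so the same call morphs toward a stored preset.
current = {"pm_index": 0.5, "feedback": 0.0, "cutoff_hz": 800.0}
stored = {"pm_index": 3.0, "feedback": 0.9, "cutoff_hz": 4000.0}
halfway = morph(current, stored, 0.5)
```

Driving `amount` from a pedal or wheel turns a single physical control into a path through the whole parameter space between the two sounds.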

A traditional synthesizer typically contains modulation sources like envelopes and LFOs. For our TCD approach we split the instrument into two main components: the pure audio engine, realized in Reaktor, and the modulation sources, defined by the firmware of the ARM microcontroller. In the Reaktor structure we add a renderer that converts TCD messages into continuous audio control signals.

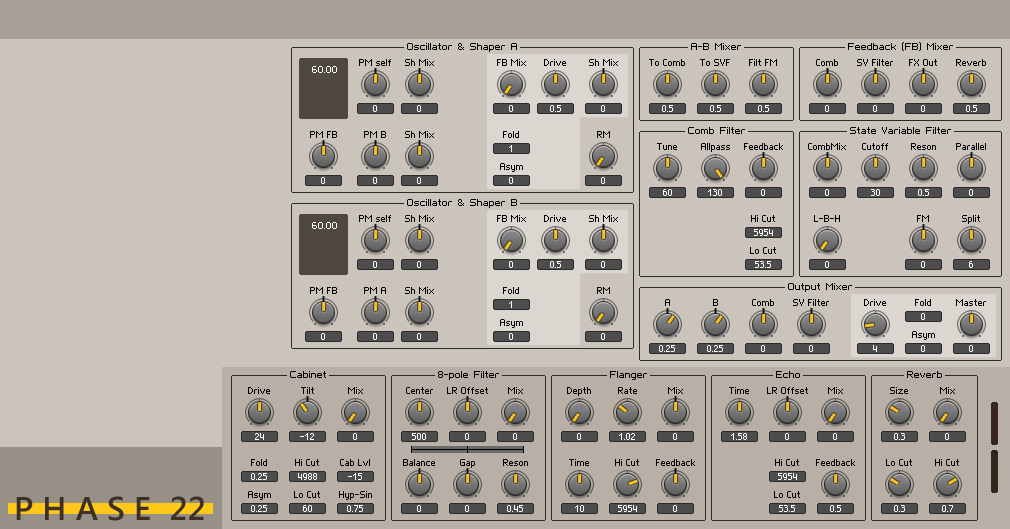
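The TCD message format is not spelled out here, so the following sketch uses a hypothetical `TargetMessage` (a destination value plus a ramp time in samples) to show what such a renderer does: it turns sparse messages from the microcontroller into a control signal that changes smoothly on every audio sample. Linear ramps are an assumption; the actual renderer may interpolate differently.

```python
from dataclasses import dataclass


@dataclass
class TargetMessage:
    """Hypothetical stand-in for a TCD message: reach `target` within `ramp_samples` samples."""
    target: float
    ramp_samples: int


class ControlRenderer:
    """Turns sparse target messages into a control value updated on every audio sample."""

    def __init__(self, initial: float = 0.0) -> None:
        self.value = initial
        self.target = initial
        self.step = 0.0
        self.remaining = 0  # samples left in the current ramp

    def receive(self, msg: TargetMessage) -> None:
        """Start a new linear ramp from wherever the signal currently is."""
        self.target = msg.target
        if msg.ramp_samples <= 0:
            self.value, self.step, self.remaining = msg.target, 0.0, 0  # jump immediately
        else:
            self.step = (msg.target - self.value) / msg.ramp_samples
            self.remaining = msg.ramp_samples

    def tick(self) -> float:
        """Advance one sample and return the control value for that sample."""
        if self.remaining > 0:
            self.remaining -= 1
            # Land exactly on the target at the end of the ramp to avoid rounding drift.
            self.value = self.target if self.remaining == 0 else self.value + self.step
        return self.value


renderer = ControlRenderer()
renderer.receive(TargetMessage(target=1.0, ramp_samples=4))
ramp = [renderer.tick() for _ in range(6)]  # four ramp steps, then the value holds
```

Because a new message always ramps from the current value, a message arriving in the middle of a ramp never causes a jump in the audio control signal.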

The following two pictures show how the parameters of our current Reaktor prototype are split between the TCD generation and the audio engine. (Please note that our instruments will have hardware user interfaces.) The first view shows the audio engine parameters:

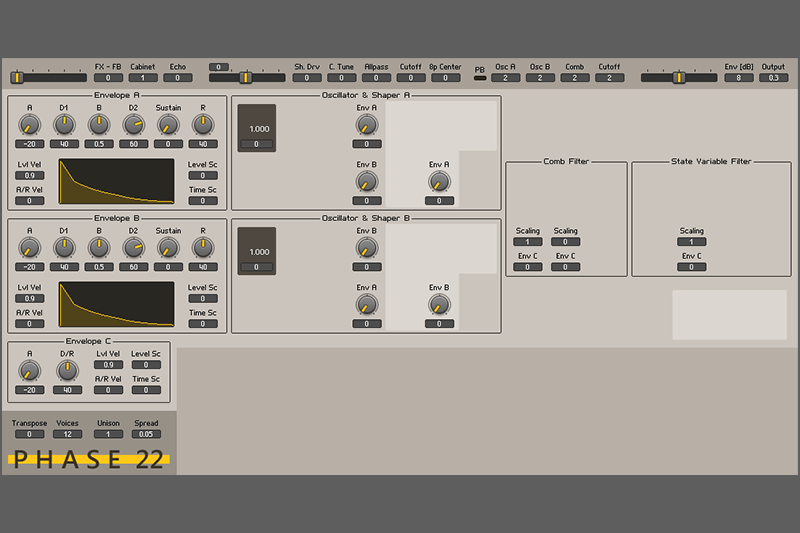

In the second view the parameters of the TCD generation are visible:

Splitting the instrument into a TCD generator and an audio engine also opens up possibilities beyond the classic MIDI protocol. By controlling the parameters of each voice separately with TCD, we gain:

- control over voice allocation

- flexible and dynamic unison modes

- individual expression within played notes

- extended sustain/damper pedal functions

- sound morphing, switching, and crossfading per voice
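To illustrate what addressing each voice separately allows, here is a hypothetical voice allocator: each voice keeps its own parameter values, a unison note occupies several voices with a symmetric detune, and when all voices are busy the oldest one is stolen. The class, method, and parameter names are invented for the example and do not describe TCD itself.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Voice:
    key: Optional[int] = None                               # None means the voice is free
    params: dict[str, float] = field(default_factory=dict)  # this voice's own parameter values


class VoiceAllocator:
    """Hypothetical allocator: per-voice parameters, unison stacking, oldest-voice stealing."""

    def __init__(self, num_voices: int) -> None:
        self.voices = [Voice() for _ in range(num_voices)]
        self.busy: list[int] = []  # indices of sounding voices, oldest first

    def _take_voice(self) -> int:
        for index, voice in enumerate(self.voices):
            if voice.key is None:
                return index
        return self.busy.pop(0)  # every voice is sounding: steal the oldest

    def note_on(self, key: int, velocity: float, unison: int = 1,
                detune_cents: float = 10.0) -> list[int]:
        """Start a note on `unison` voices, spread symmetrically around the key's pitch."""
        unison = max(1, min(unison, len(self.voices)))
        assigned = []
        for n in range(unison):
            index = self._take_voice()
            spread = (n - (unison - 1) / 2) * detune_cents
            self.voices[index] = Voice(key, {"velocity": velocity, "detune_cents": spread})
            self.busy.append(index)
            assigned.append(index)
        return assigned

    def note_off(self, key: int) -> list[int]:
        """Free every voice playing `key` and return their indices."""
        released = [i for i, v in enumerate(self.voices) if v.key == key]
        for index in released:
            self.voices[index] = Voice()
            self.busy.remove(index)
        return released

    def set_voice_param(self, index: int, name: str, value: float) -> None:
        """Change one parameter of one sounding voice only."""
        self.voices[index].params[name] = value


alloc = VoiceAllocator(num_voices=4)
stack = alloc.note_on(60, velocity=0.8, unison=2)  # one key, two detuned voices
alloc.set_voice_param(stack[0], "pressure", 0.3)   # affects only the first of them
```

`set_voice_param` is the kind of operation a channel-wide MIDI control change cannot express directly: it changes one sounding voice without touching the others.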

The high resolution of TCD (compared to MIDI) provides:

- a wide dynamic range with a great amount of detail (no noticeable steps)

- very fine resolution for pedals, wheels, and other physical inputs
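The difference in resolution is easy to quantify. Standard MIDI control changes and note velocities carry 7-bit values (128 steps). The sketch below computes the step size for 7 bits and, for comparison, for 14 and 16 bits, assuming a level control that spans 60 dB in equal dB steps; the 60 dB range and the higher bit depths are illustrative and are not the documented TCD resolution.

```python
def step_size(bits: int, full_range: float) -> float:
    """Distance between neighboring values when 2**bits evenly spaced values cover full_range."""
    if bits < 1:
        raise ValueError("bits must be at least 1")
    return full_range / (2 ** bits - 1)


RANGE_DB = 60.0  # assumed span of a level control, mapped linearly in dB

for bits in (7, 14, 16):
    print(f"{bits:2d} bits: {2 ** bits:6d} values, {step_size(bits, RANGE_DB):.4f} dB per step")
```

With 7 bits each step is 60 / 127, about 0.47 dB, coarse enough to be heard as stepping ("zipper noise") during slow sweeps; at 14 bits the step shrinks to about 0.0037 dB.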

Evolutionary Instrument Technology

Because our audio and TCD engines are software-based, they are open to future updates. The hardware user interface is designed so that one synth engine can be replaced by another with a completely different set of parameters. A new engine can be installed by loading a Reaktor Ensemble and a microprocessor firmware file from a USB flash drive. This way we can:

- make updates involving current synth engine improvements

- provide a variety of different engines (only one can be installed at a time)

- allow for customization: developers can modify our designs or even create their own synth engines. We will support them with an API.

At the moment our prototype synthesizer is the Reaktor instrument Phase 22. You can read more about Phase 22 here. In addition to the Phase 22 engine, we are already working on a second instrument concept with delay-based resonators (comb filters) as the main components. For future projects we are considering components from waveguide, modal, additive, subtractive, phase and frequency modulation, wave-shaping, and phase-distortion synthesis, as well as pure numerical signal generation.
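Delay-based resonators are built from comb filters. A minimal feedback comb filter, sketched below under the assumption of an integer delay length (no fractional-delay interpolation and no damping filter in the loop), resonates at `sample_rate / delay` and its harmonics; exciting it with an impulse or a short noise burst gives a decaying, pitched tone. This is a textbook structure, not the design of our second instrument.

```python
from collections import deque


class CombResonator:
    """Feedback comb filter y[n] = x[n] + g * y[n - D].

    Resonates at sample_rate / D Hz and its integer multiples. D is rounded to a whole
    number of samples, so the tuning is only approximate.
    """

    def __init__(self, sample_rate: float, freq_hz: float, feedback: float) -> None:
        if freq_hz <= 0.0:
            raise ValueError("freq_hz must be positive")
        if not abs(feedback) < 1.0:
            raise ValueError("|feedback| must be below 1 for the resonance to decay")
        self.delay = max(1, round(sample_rate / freq_hz))
        self.feedback = feedback
        # Holds y[n - D] ... y[n - 1]; the oldest sample sits at index 0.
        self.history = deque([0.0] * self.delay, maxlen=self.delay)

    def tick(self, x: float) -> float:
        y = x + self.feedback * self.history[0]
        self.history.append(y)  # maxlen discards y[n - D] automatically
        return y


# Usage: excite a roughly 220 Hz resonator with a single impulse and let it ring for one second.
sr = 48000.0
comb = CombResonator(sr, freq_hz=220.0, feedback=0.98)
ringing = [comb.tick(1.0 if n == 0 else 0.0) for n in range(int(sr))]
```

The feedback gain sets the decay time: values closer to 1 make the resonator ring longer, which is why it is a natural candidate for real-time control by the player.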

Because we focus on digital synthesis, we feel we have a virtually unlimited field of possibilities. After all, there is so much more to synthesis than virtual analog, wavetables, and samples!